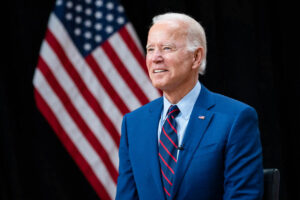

WASHINGTON – The Biden administration said on Tuesday it was taking the first step toward writing key standards and guidance for the safe deployment of generative artificial intelligence and how to test and safeguard systems.

The Commerce Department’s National Institute of Standards and Technology (NIST) said it was seeking public input by Feb. 2 for conducting key testing crucial to ensuring the safety of AI systems.

Commerce Secretary Gina Raimondo said the effort was prompted by President Joe Biden’s October executive order on AI and aimed at developing “industry standards around AI safety, security, and trust that will enable America to continue leading the world in the responsible development and use of this rapidly evolving technology.”

The agency is developing guidelines for evaluating AI, facilitating development of standards and providing testing environments for evaluating AI systems. The request seeks input from AI companies and the public on generative AI risk management and reducing risks of AI-generated misinformation.

Generative AI – which can create text, photos and videos in response to open-ended prompts – in recent months has spurred excitement as well as fears it could make some jobs obsolete, upend elections and potentially overpower humans with catastrophic effects.

Biden’s order directed agencies to set standards for that testing and address related chemical, biological, radiological, nuclear, and cybersecurity risks.

NIST is working on setting guidelines for testing, including where so-called “red-teaming” would be most beneficial for AI risk assessment and management and setting best practices for doing so.

External red-teaming has been used for years in cybersecurity to identify new risks, with the term referring to U.S. Cold War simulations where the enemy was termed the “red team.”

In August, the first-ever U.S. public assessment “red-teaming” event was held during a major cybersecurity conference and organized by AI Village, SeedAI and Humane Intelligence.

Thousands of participants tried to see if they “could make the systems produce undesirable outputs or otherwise fail, with the goal of better understanding the risks that these systems present,” the White House said.

The event “demonstrated how external red-teaming can be an effective tool to identify novel AI risks,” it added. — Reuters